High disk I/O is one of the most common and most misunderstood causes of slow Linux servers.

This guide is written the way real incidents are handled — from symptom to root cause, with clear interpretation at every step.

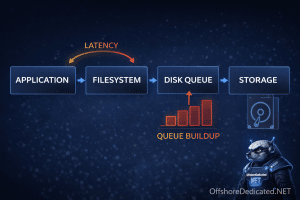

What “High Disk I/O” Actually Means

It does NOT just mean “disk is busy”.

It can mean:

- Too many reads/writes

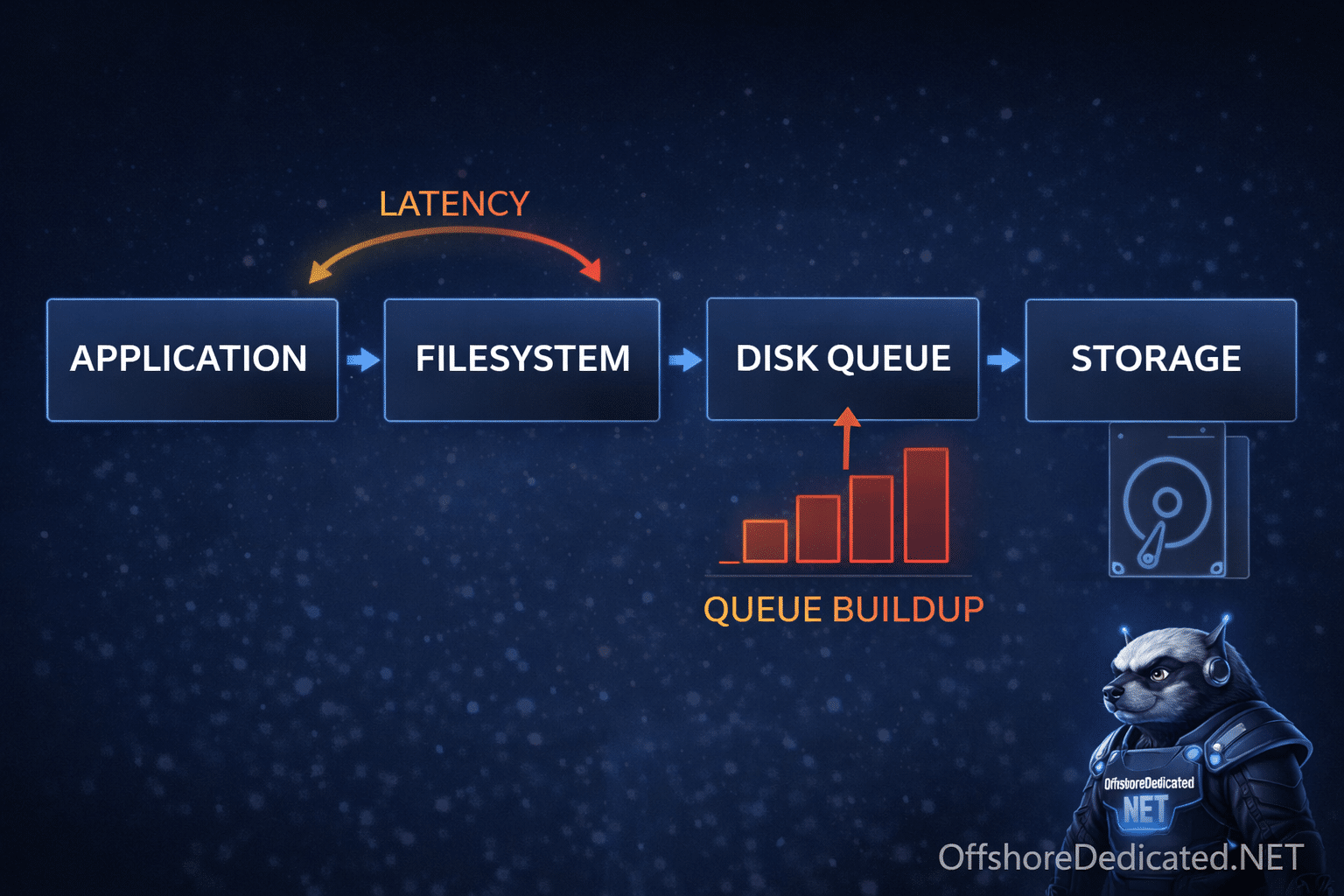

- Slow storage latency

- Queue buildup (requests waiting)

- Blocking processes (D-state)

👉 Your goal is to identify which one.

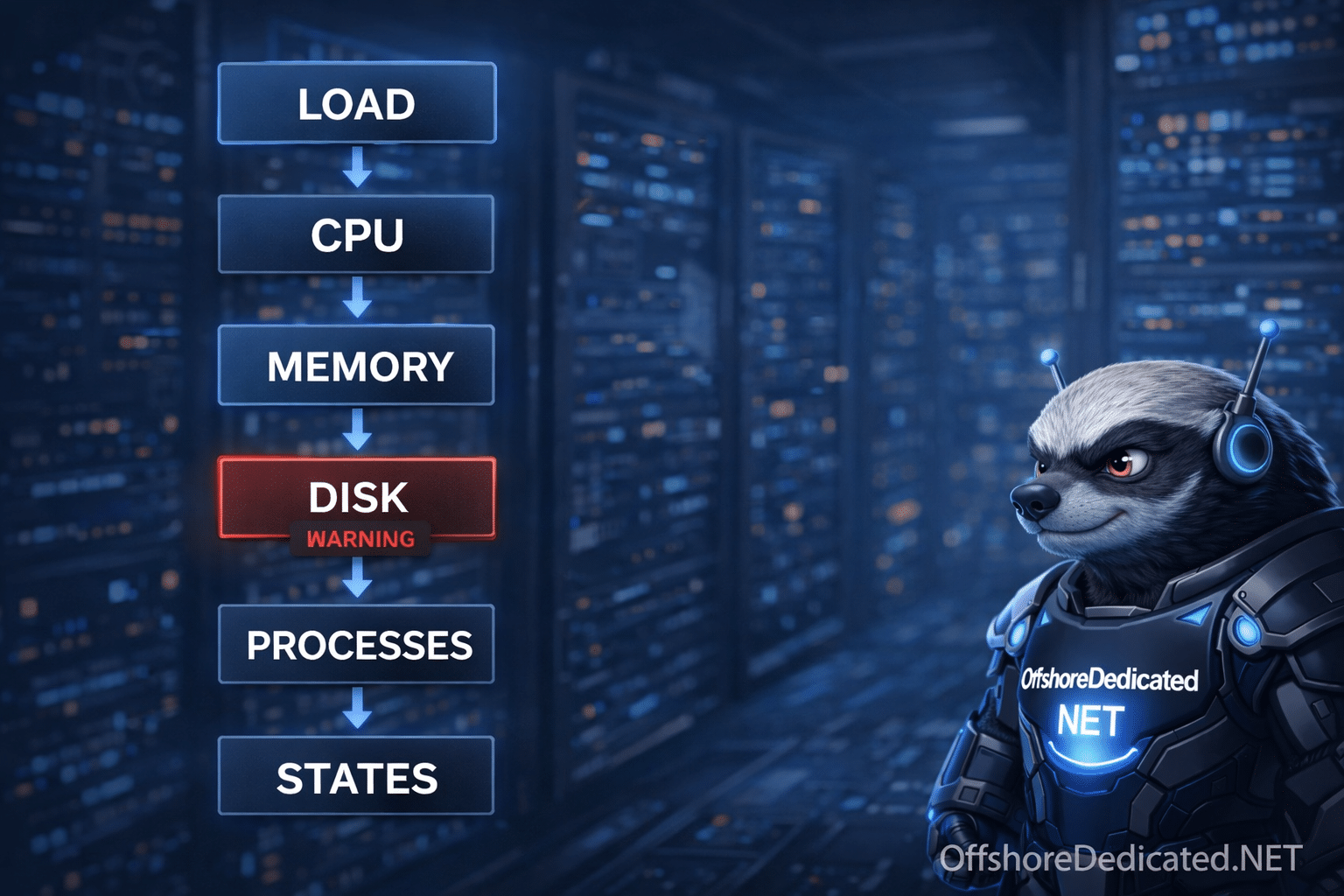

Step 0: Confirm the Symptom (from workflow)

Usually you arrive here after:

- High load

- Low CPU usage

- Slow responses

Step 1: iostat (Primary Tool)

Install if needed:

# Linux (RHEL/CentOS)

yum install sysstat -y

# Linux (Debian/Ubuntu)

apt install sysstat -y

Run:

iostat -x 1

Key Columns (MUST understand)

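| Field | Meaning |
|---|---|
| %util | Percentage of time the device was busy servicing requests (not disk space used) |
| await | Average time per request in ms, including time spent in the queue (newer sysstat releases report r_await and w_await instead) |
| r/s, w/s | Read and write requests completed per second |
| avgqu-sz | Average request queue length (named aqu-sz in newer sysstat releases) |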
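Column names and positions differ between sysstat releases, so it can help to look columns up by header name rather than by position. The sketch below is one possible way to do that. The function name `iostat_summary` is hypothetical, and it assumes the header row starts with `Device` and that the queue column is called either `aqu-sz` or `avgqu-sz`.

```shell
# iostat_summary: hypothetical helper, reads `iostat -x` output on stdin
# and prints "<device> util=<%util> queue=<queue size>" per device row.
# Assumes the header row starts with "Device" and the queue column is
# named aqu-sz (newer sysstat) or avgqu-sz (older sysstat).
iostat_summary() {
  awk '
    $1 ~ /^Device/ {
      # Header row: remember the position of every column by name.
      split("", col)
      for (i = 1; i <= NF; i++) col[$i] = i
      next
    }
    # Device rows: a header has been seen, the row is long enough to
    # reach %util, and it is not part of the avg-cpu block.
    ("%util" in col) && NF >= col["%util"] && $1 !~ /^avg-cpu/ {
      q = ("aqu-sz" in col) ? $(col["aqu-sz"]) : $(col["avgqu-sz"])
      printf "%s util=%s queue=%s\n", $1, $(col["%util"]), q
    }
  '
}

# Example: summarise two one-second reports (the first covers time since boot).
# iostat -x 1 2 | iostat_summary
```

Keeping the parser as a filter on stdin means it can also be run against saved iostat output captured during an incident.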

How to Interpret iostat (THIS is where expertise is)

Case A: %util ~100%

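Disk is saturated. On devices that serve requests in parallel (SSDs, NVMe, RAID arrays), confirm with await and queue size, because %util can reach 100% before the device hits its real limit.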

Case B: High await (>50ms HDD, >10ms SSD)

Latency problem

Case C: High avgqu-sz

Requests are piling up (queue bottleneck)
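The three cases can be combined into a small check. This is a minimal sketch, not a definitive rule set: the function name `classify_io` is hypothetical, the await limits (10 ms for SSD, 50 ms for HDD) come from Case B, and the 90% utilisation and queue-depth-of-2 cutoffs are illustrative assumptions that should be tuned per system.

```shell
# classify_io UTIL AWAIT_MS QUEUE [AWAIT_LIMIT_MS]
# Hypothetical helper: prints one finding per line for a single sample.
# The 90% util and queue > 2 cutoffs are assumptions for illustration;
# the await limit defaults to 10 ms (SSD), pass 50 for an HDD.
classify_io() {
  awk -v util="$1" -v await="$2" -v queue="$3" -v limit="${4:-10}" 'BEGIN {
    found = 0
    if (util + 0 >= 90)          { print "saturated";     found = 1 }  # Case A
    if (await + 0 > limit + 0)   { print "high-latency";  found = 1 }  # Case B
    if (queue + 0 > 2)           { print "queue-buildup"; found = 1 }  # Case C
    if (!found) print "ok"
  }'
}

# Example: 30% busy, 60 ms await, short queue, judged against the HDD limit.
# classify_io 30 60 0.5 50
```

A device can match more than one case at once, which is why the function prints every finding instead of stopping at the first.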

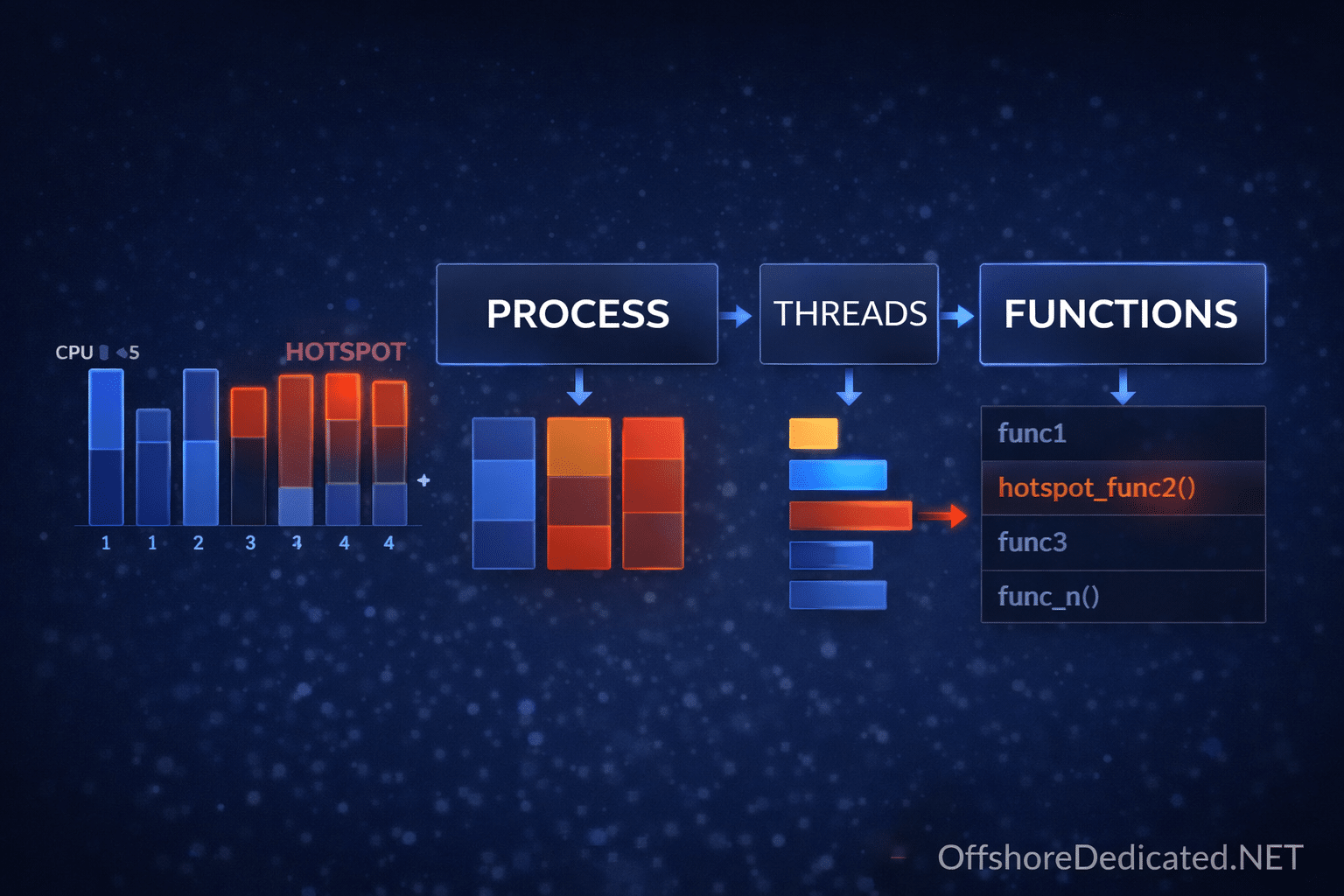

Step 2: iotop (Who is Causing I/O)

iotop

Note: Requires root privileges on Linux. On macOS, iotop is not native.

Look for:

- Processes with the highest DISK READ and DISK WRITE rates

Step 3: vmstat (System View)

vmstat 1

Look at:

wa → I/O wait

Interpretation

- High wa → CPU waiting on disk
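To watch only the I/O wait figure over time, the wa column can be pulled out of the vmstat output by its header name. The sketch below assumes the usual vmstat layout, where a header row contains the label `wa` and sample rows start with a number. The function name `vmstat_wa` is hypothetical.

```shell
# vmstat_wa: hypothetical helper, reads `vmstat` output on stdin and
# prints the wa value of every sample row.
vmstat_wa() {
  awk '
    {
      # Column header row: remember which field is labelled "wa".
      for (i = 1; i <= NF; i++) if ($i == "wa") { wa = i; next }
    }
    # Sample rows start with a number; ignore anything before a header.
    wa && $1 ~ /^[0-9]+$/ { print $wa }
  '
}

# Example: five one-second samples (the first is an average since boot).
# vmstat 1 5 | vmstat_wa
```

Finding the column by name keeps the filter working when a vmstat version adds or reorders CPU columns.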

Step 4: Check D-State Processes

ps -eo pid,stat,cmd | awk '$2 ~ /^D/'

Processes whose STAT starts with D are in uninterruptible sleep, which confirms blocking I/O.
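Filtering on the STAT column, rather than searching the whole line for the letter D, avoids false matches on command lines such as `sshd -D`. A minimal sketch follows, assuming the `pid,stat,cmd` column order used in this step. The function name `d_state_only` is hypothetical.

```shell
# d_state_only: hypothetical helper, reads `ps -eo pid,stat,cmd` output
# on stdin and keeps only rows whose STAT column (field 2) starts with D.
d_state_only() {
  awk '$2 ~ /^D/'
}

# Example: list processes currently in uninterruptible sleep.
# ps -eo pid,stat,cmd | d_state_only
```

D-state is often brief, so running the check a few times in a row gives a better picture than a single snapshot.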

Step 5: Identify Files Causing I/O

lsof -p PID

Look for:

- Logs

- Databases

- Temporary files
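The lsof listing for a busy process can be long, so it may help to narrow it to regular files that are open for writing. The sketch below assumes the default lsof column layout (COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME) and paths without spaces. The function name `files_open_for_write` is hypothetical.

```shell
# files_open_for_write: hypothetical helper, reads `lsof -p PID` output
# on stdin and prints regular files whose FD mode is w (write) or
# u (read and write). Assumes the default lsof columns and no spaces
# in file paths.
files_open_for_write() {
  awk '$5 == "REG" && $4 ~ /^[0-9]+[wu]/ { print $9 }'
}

# Example: replace 1234 with the PID found in the earlier steps.
# lsof -p 1234 | files_open_for_write
```

The remaining paths usually point straight at the log, database, or temporary files listed above.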

Real Production Case (Step-by-Step)

Situation

- Website slow

- Load = 15

- CPU idle high

Investigation

- iostat → %util = 100%

- vmstat → wa high

- iotop → MySQL heavy writes

Root Cause

Database writing too frequently to disk

Fix Options

- Optimize queries

- Add caching

- Move to faster storage (SSD/NVMe)

Common Causes of High Disk I/O

- Database overload

- Log files growing rapidly

- Backup processes

- Malware / abuse scripts

- Slow or failing disks

Time-Based Thinking

Sudden spike

- Traffic surge

- Backup job started

Gradual increase

- Database growth

- Log accumulation

Common Mistakes

❌ Looking only at %util

Latency (await) is equally important

❌ Killing processes blindly

Fix cause, not symptom

When This Matters in Production

Disk I/O issues affect:

- Databases

- File servers

- Streaming workloads


Related Linux Guides

- How to Investigate a Slow Linux Server

- Linux Process States Explained

- How to Monitor Processes with htop

Final Takeaway

High disk I/O is not just a metric.

It is a signal of system pressure.

An expert does not just see 100% usage —

👉 They understand why the disk is struggling.