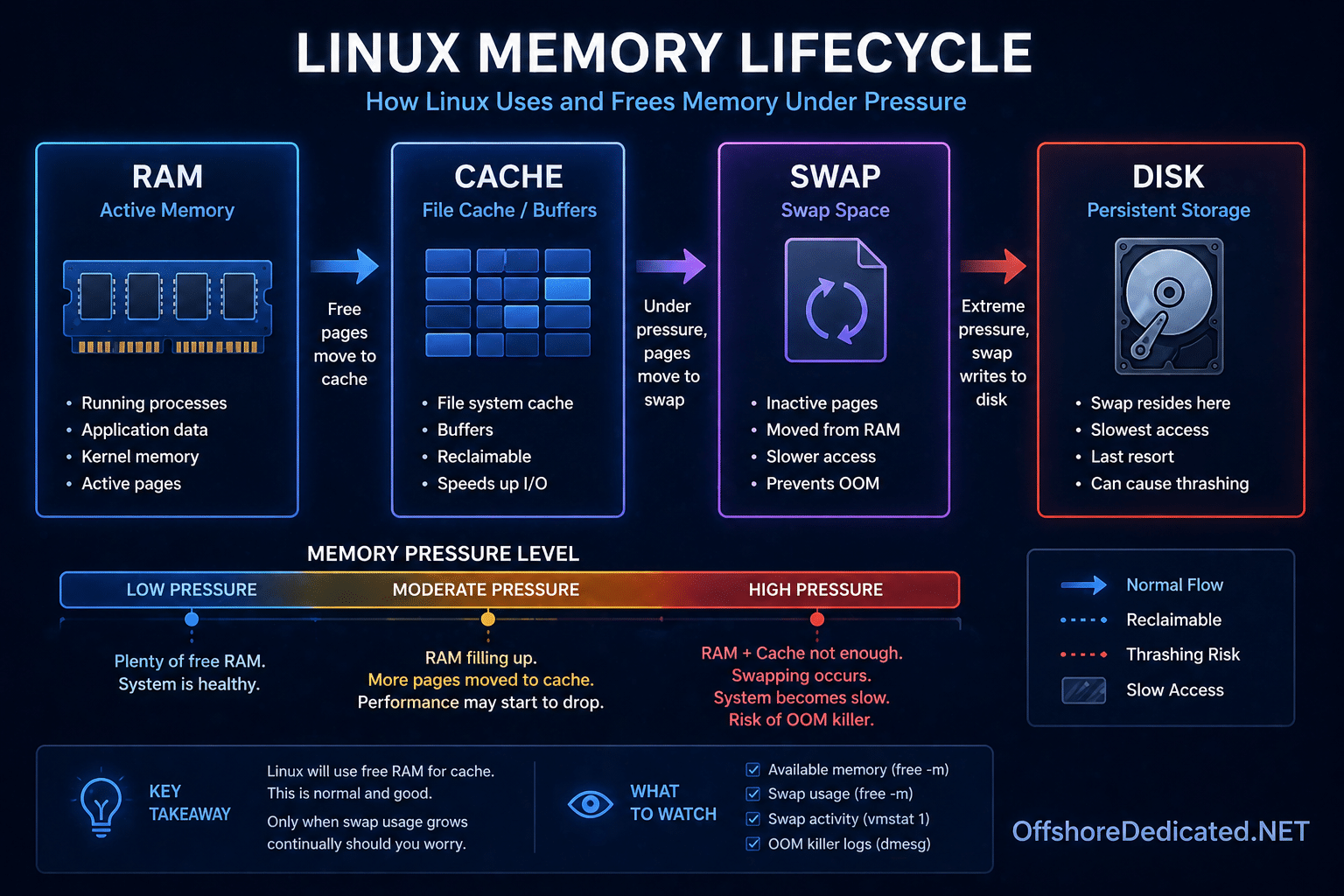

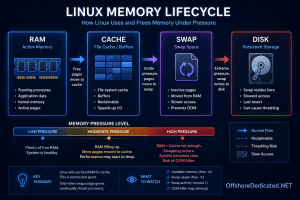

Memory issues are among the most misunderstood problems in Linux systems.

Most people run:

    free -m

…and immediately draw the wrong conclusion.

This guide shows how experienced system administrators diagnose memory pressure: not just by reading numbers, but by understanding how Linux actually uses memory under load.

Why “Used Memory” Is a Misleading Metric

Linux aggressively uses memory for:

- File cache

- Buffers

- Disk acceleration

👉 So high “used memory” is often a good thing, not a problem.

Step 1: Use free -m Correctly

    free -m

Example:

                  total        used        free      shared  buff/cache   available
    Mem:           8000        7200         200         100        4600        3000
    Swap:          2000         100        1900

What Actually Matters

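👉 The `available` column, NOT `used`. It estimates how much memory new applications can claim without swapping, because it counts cache that the kernel can reclaim.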
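The same figure can be read straight from `/proc/meminfo`, which is useful in scripts. The following is a minimal sketch, assuming a kernel that exposes `MemAvailable` (3.14 or later); the function name `mem_available_pct` and its optional file argument are illustrative, not part of any standard tool.

```shell
# Print MemAvailable as a percentage of MemTotal.
# Reads /proc/meminfo by default; the optional file argument is an
# assumption added here so the function can be tried on sample data.
mem_available_pct() {
    awk '
        $1 == "MemTotal:"     { total = $2 }   # value in kB
        $1 == "MemAvailable:" { avail = $2 }   # value in kB
        END {
            if (total > 0) printf "%.1f\n", avail * 100 / total
            else exit 1
        }
    ' "${1:-/proc/meminfo}"
}
```

Calling `mem_available_pct` with no argument reads the live system and prints a single number such as a percentage with one decimal place.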

Interpretation

Healthy System

- Available memory is sufficient

- Swap usage is low

👉 No real pressure

Warning State ⚠️

- Available memory low

- Swap usage increasing

👉 Memory pressure starting

Critical State 🚨

- Available memory near zero

- Swap heavily used

👉 System is under serious stress

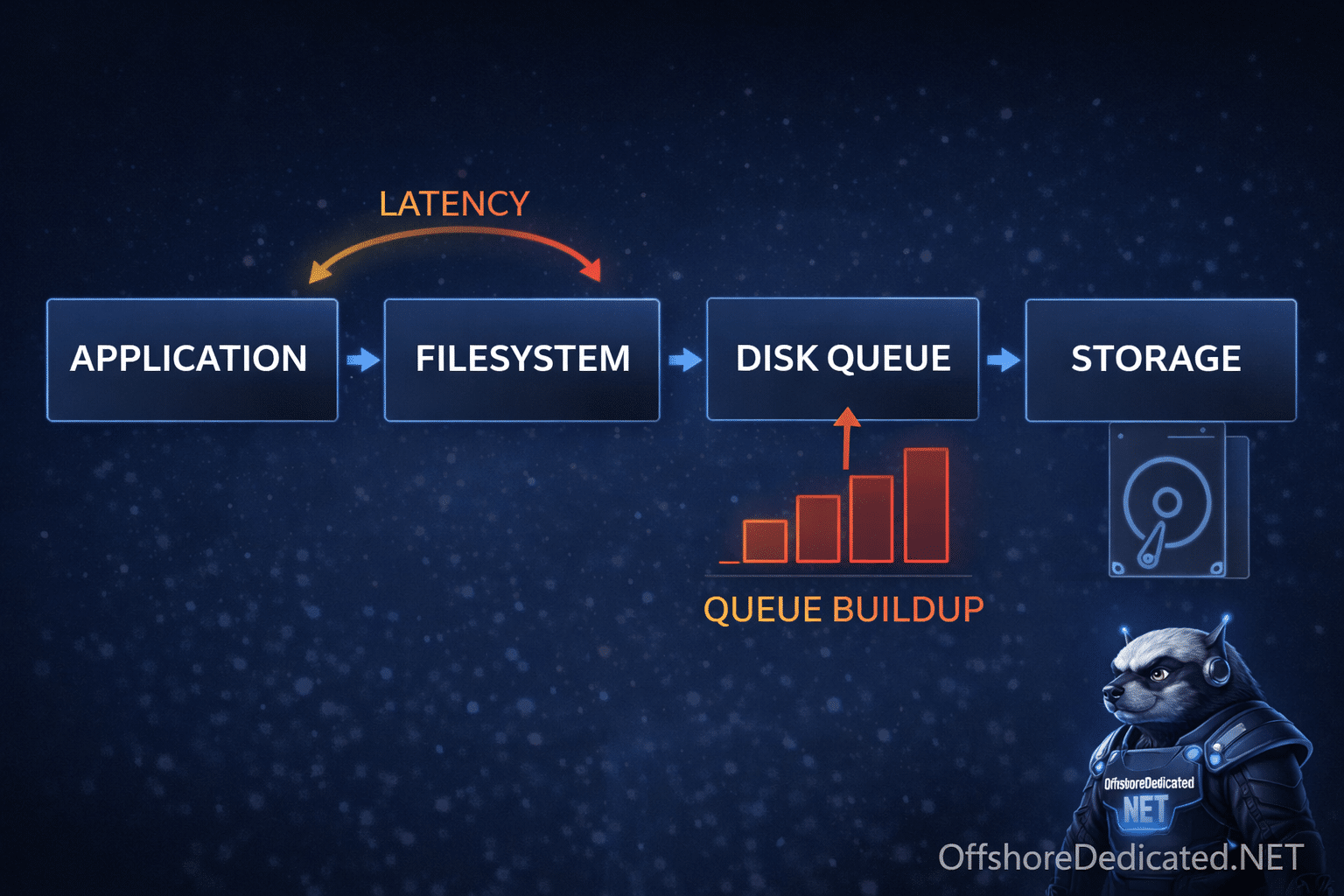

Step 2: Check Memory Behavior Over Time

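    vmstat 1

Key Columns

| Column | Meaning |
|---|---|
| si | Swap in: memory read back from swap per second |
| so | Swap out: memory written to swap per second |
| free | Idle memory |
| wa | Percentage of CPU time spent waiting for I/O |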

Interpretation

Case A: si/so = 0

👉 No swapping → healthy

Case B: si/so increasing ⚠️

👉 Active swapping → slowdown begins

Case C: continuous swapping 🚨

👉 System thrashing → severe performance degradation
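To tell these cases apart without watching the screen, the swap columns can be totalled over a short window. This is a rough sketch, assuming the standard procps `vmstat` layout with a header row that names `si` and `so`; `swap_activity` is a hypothetical helper name. It skips the first data row, because `vmstat` reports averages since boot there.

```shell
# Sum the si and so columns from vmstat output read on stdin.
# Column positions are looked up from the header row, not hard-coded.
swap_activity() {
    awk '
        # The header row starts with "r b"; remember where si and so are.
        $1 == "r" && $2 == "b" {
            for (i = 1; i <= NF; i++) {
                if ($i == "si") si_col = i
                if ($i == "so") so_col = i
            }
            next
        }
        # Data rows start with a number (the r column).
        si_col && $1 ~ /^[0-9]+$/ {
            rows++
            if (rows == 1) next          # first row is the since-boot average
            si += $si_col; so += $so_col; n++
        }
        END { printf "samples=%d si_total=%d so_total=%d\n", n, si, so }
    '
}
```

A typical invocation would be `vmstat 1 10 | swap_activity`. Totals of zero correspond to Case A; totals that stay above zero across repeated runs point toward Case B or C.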

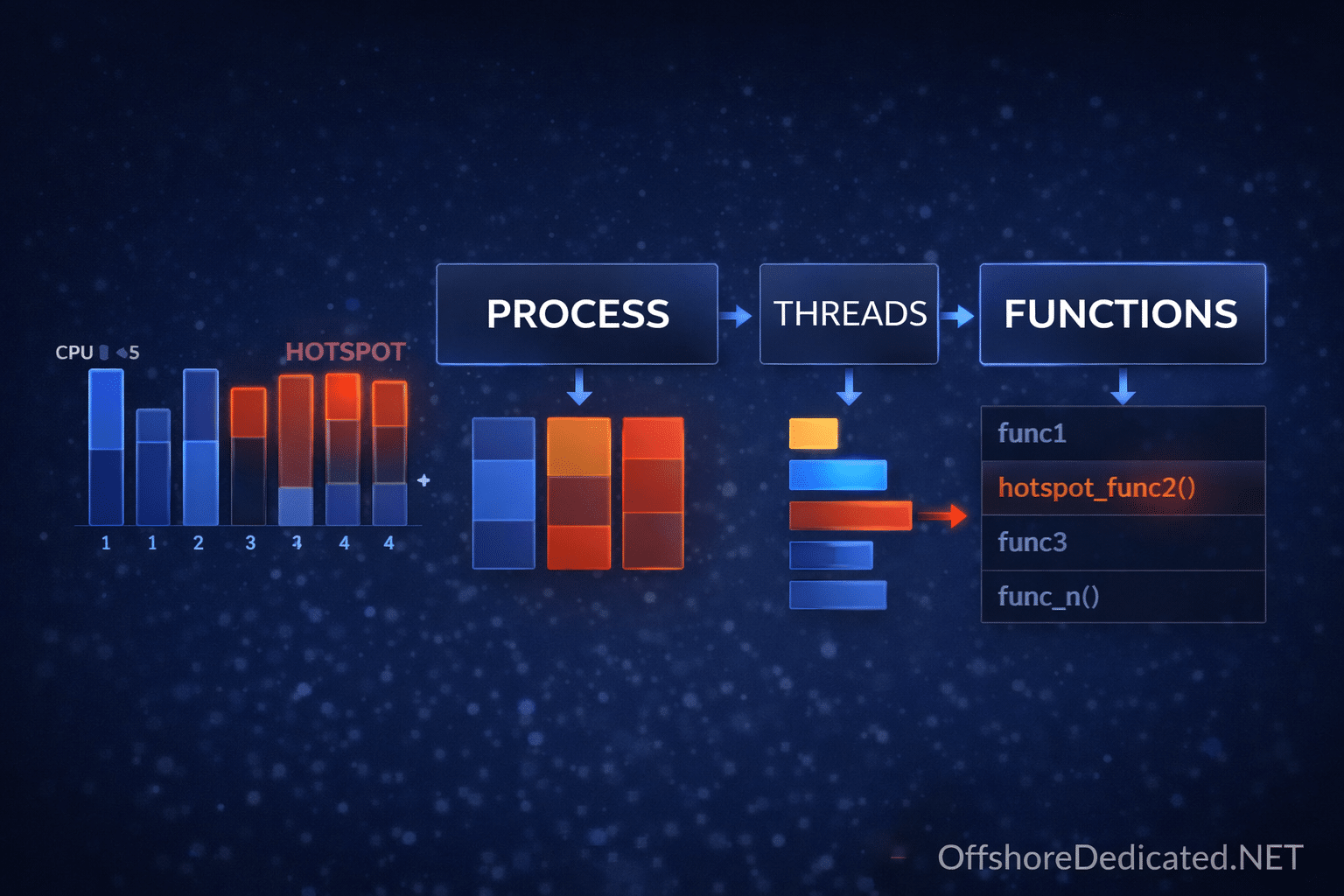

Step 3: Check Processes Consuming Memory

    htop

Keys (Linux / macOS / Windows via SSH)

- Sort by memory: `F6`, then choose the memory column

- Tree view: `F5`

- Filter: `F4`

Mac users: use `Fn + F6` if needed.

What Experts Look For

- One process consuming large memory

- Gradually increasing memory usage (leak)

- Many processes each using moderate memory

Step 4: Detect Memory Leaks

Run:

    ps -eo pid,cmd,%mem --sort=-%mem | head

What to Watch

- Same process increasing over time

- Memory not released

👉 Classic sign of memory leak
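A single snapshot cannot show growth, so it helps to sample one process repeatedly. The sketch below reads `VmRSS` from `/proc/<pid>/status`; the names `rss_kib`, `watch_rss`, and the `PROC_ROOT` override are assumptions made for this example, not standard tools.

```shell
# Print the resident set size (RSS, in KiB) of one process.
# PROC_ROOT is a hypothetical override so the function can be tried on sample data.
rss_kib() {
    awk '$1 == "VmRSS:" { print $2 }' "${PROC_ROOT:-/proc}/$1/status" 2>/dev/null
}

# Sample the RSS of a PID a fixed number of times and print timestamped readings.
# Arguments: PID, number of samples (default 5), seconds between samples (default 60).
watch_rss() {
    pid=$1; count=${2:-5}; interval=${3:-60}
    i=0
    while [ "$i" -lt "$count" ]; do
        rss=$(rss_kib "$pid")
        [ -n "$rss" ] || { echo "process $pid not found" >&2; return 1; }
        printf '%s pid=%s rss_kib=%s\n' "$(date +%H:%M:%S)" "$pid" "$rss"
        i=$((i + 1))
        [ "$i" -lt "$count" ] && sleep "$interval"
    done
    return 0
}
```

For example, `watch_rss 1234 10 300` would take ten readings five minutes apart. Readings that climb steadily and never drop back after load subsides are consistent with a leak, though a growing in-process cache can look the same.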

Step 5: Understand Cache vs Real Usage

Linux uses cache to speed up disk operations.

👉 This memory is reclaimable

Quick Test (Optional)

    sync; echo 3 > /proc/sys/vm/drop_caches

⚠️ This requires root. Do NOT use it in production unnecessarily: dropping caches forces later reads to go back to disk.
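Cache size can also be inspected without dropping anything. The following sketch sums the relevant `/proc/meminfo` fields; `cache_breakdown` is a hypothetical helper name. Note that `Cached` also counts shared memory and tmpfs, which cannot simply be discarded, so the figures are best read as an upper bound on reclaimable memory.

```shell
# Print how much memory is held in buffers and caches, in MiB.
# Reads /proc/meminfo by default; the optional file argument is an
# assumption added here so the function can be tried on sample data.
cache_breakdown() {
    awk '
        $1 == "Buffers:"      { buffers = $2 }   # block device buffers, kB
        $1 == "Cached:"       { cached = $2 }    # page cache (includes tmpfs), kB
        $1 == "SReclaimable:" { slab = $2 }      # reclaimable kernel slab, kB
        END {
            printf "buffers_mib=%d cached_mib=%d slab_reclaimable_mib=%d\n",
                   buffers / 1024, cached / 1024, slab / 1024
        }
    ' "${1:-/proc/meminfo}"
}
```

Running `cache_breakdown` on a busy file server will usually show a large `cached_mib` value, which is the memory that `free` reports under `buff/cache`.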

Step 6: Check OOM Killer (Critical Insight)

    dmesg | grep -i kill

If you see:

    Out of memory: Kill process 1234

👉 The system ran out of RAM and the kernel's OOM killer terminated a process to recover.
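Grepping for "kill" can match unrelated lines, so a narrower filter may be worth having. This is a sketch that assumes the kernel log wording "Out of memory: Kill process" (older kernels) or "Out of memory: Killed process" (newer kernels), followed by the PID and the process name in parentheses; `oom_victims` is a hypothetical helper name.

```shell
# Extract OOM-killer victims (PID and process name) from kernel log text on stdin.
# Assumed message shapes: "Out of memory: Kill process N (name)" and
# "Out of memory: Killed process N (name)"; other lines are ignored.
oom_victims() {
    sed -n -E 's/.*[Oo]ut of memory: Kill(ed)? process ([0-9]+) \(([^)]*)\).*/pid=\2 name=\3/p'
}
```

It would typically be fed from `dmesg | oom_victims` or `journalctl -k | oom_victims`. On some systems reading the kernel log requires root.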

Step 7: Swap Analysis

    swapon --show

Interpretation

- Swap used lightly → OK

- Swap heavily used → pressure
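When swap is heavily used, the next question is usually which processes are in it. The sketch below reads the `VmSwap` field from each `/proc/<pid>/status`; `swap_by_process` and the `PROC_ROOT` override are assumptions made for this example. Only the first word of a process name is printed.

```shell
# List the processes holding the most swap, largest first.
# Output columns: swap in KiB, PID, process name.
# PROC_ROOT is a hypothetical override so the function can be tried on sample data.
swap_by_process() {
    for f in "${PROC_ROOT:-/proc}"/[0-9]*/status; do
        [ -r "$f" ] || continue
        pid=${f%/status}; pid=${pid##*/}
        awk -v pid="$pid" '
            $1 == "Name:"   { name = $2 }
            $1 == "VmSwap:" { swap = $2 }   # in kB; absent for kernel threads
            END { if (swap > 0) printf "%d %s %s\n", swap, pid, name }
        ' "$f" 2>/dev/null
    done | sort -rn | head -n "${1:-10}"
}
```

`swap_by_process 5` would print the five largest swap users. Reading the status files of other users' processes may require root.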

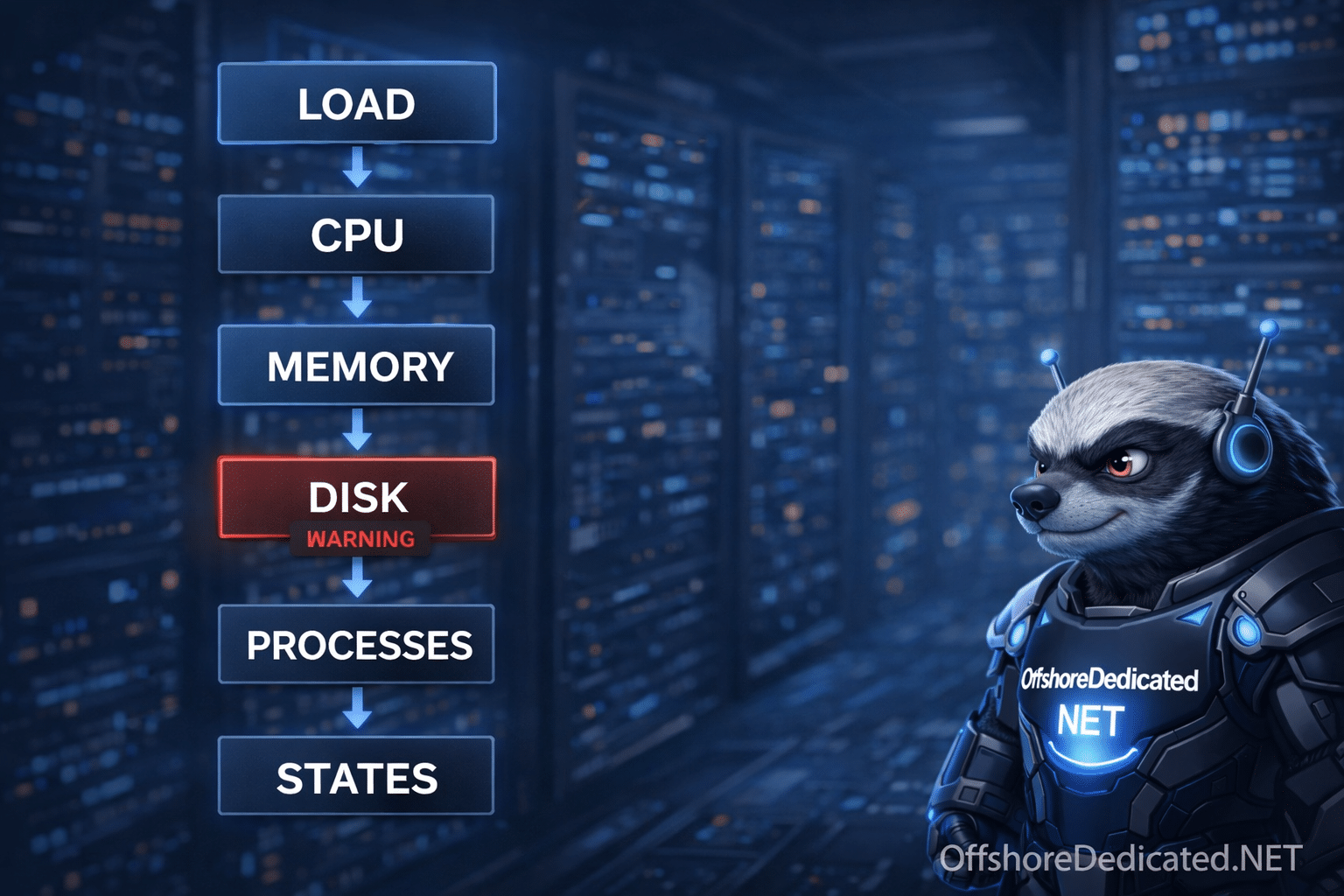

Real Production Case (End-to-End)

Situation

- Website slow

- Random crashes

Step 1: free -m

    available = very low
    swap used = high

Step 2: vmstat

    si/so constantly increasing

Step 3: htop

- Node.js process increasing memory continuously

Diagnosis

👉 Memory leak in application

👉 System forced to swap

👉 Performance degraded

Fix

- Restart service

- Fix memory leak

- Increase RAM

- Add monitoring
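For the monitoring item, one signal worth considering is the kernel's pressure stall information (PSI), which reports the share of time tasks were stalled waiting for memory. The sketch below assumes a kernel with PSI enabled (4.20 or later) and the usual `some` / `full` line format in `/proc/pressure/memory`; `memory_pressure` is a hypothetical helper name.

```shell
# Print the 10-second "some" and "full" memory stall averages from PSI.
# Reads /proc/pressure/memory by default; the optional file argument is an
# assumption added here so the function can be tried on sample data.
memory_pressure() {
    awk '
        { sub(/^avg10=/, "", $2) }        # strip the key, keep the number
        $1 == "some" { some = $2 }        # at least one task stalled
        $1 == "full" { full = $2 }        # all non-idle tasks stalled
        END { printf "some_avg10=%s full_avg10=%s\n", some, full }
    ' "${1:-/proc/pressure/memory}"
}
```

Values near zero indicate no stalls. What level should trigger an alert depends on the workload, so any threshold would need to be chosen from observed baselines.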

Time-Based Thinking (Expert Mindset)

Sudden memory spike

- Traffic surge

- Deployment bug

Gradual increase

- Memory leak

- Cache growth

- Data accumulation

Practical Thresholds

| Metric | Healthy | Warning |
|---|---|---|
| Available Memory (% of total RAM) | >20% | <10% |
| Swap Usage | Minimal | Increasing |
| Swap Activity | 0 | Continuous |
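The available-memory row can be turned into a simple check. This is a minimal sketch, assuming the percentages refer to available memory as a share of total RAM; the table does not name the band between 10% and 20%, so the label "borderline" is an assumption, as is the function name `classify_available`.

```shell
# Classify an available-memory percentage using the table thresholds.
# >20 is healthy and <10 is warning, as in the table; the "borderline"
# label for the 10-20 band is an assumption made for this example.
classify_available() {
    awk -v pct="$1" 'BEGIN {
        p = pct + 0                      # force numeric comparison
        if (p > 20)      print "healthy"
        else if (p < 10) print "warning"
        else             print "borderline"
    }'
}
```

For instance, `classify_available 37.5` prints `healthy` and `classify_available 5` prints `warning`.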

Common Mistakes

❌ “Used memory is high → problem”

Wrong. Linux uses memory efficiently.

❌ Ignoring swap activity

Swap behavior tells the real story.

❌ Restarting services blindly

Fix the root cause, not symptoms.

When This Matters in Production

Memory pressure directly affects:

- Databases

- APIs

- Web servers

- Background workers

Final Takeaway

Memory issues are not about numbers.

They are about behavior over time.

An expert does not ask:

👉 “How much memory is used?”

They ask:

👉 “Is the system under memory pressure?”

That is the difference between guessing and diagnosing.